Amazon S3 Files

- Rom Irinco

- Apr 12


A hands-on deep dive into AWS's new S3 Files feature — bridging the gap between object storage and POSIX-style file access for enterprise workloads.


🗂️What is Amazon S3 Files?

Amazon S3 has always been the backbone of enterprise data lakes, ML training pipelines, and cloud-native application storage. But for years, one fundamental friction point remained: S3 is object storage, not a file system. Applications expecting POSIX semantics — directory traversal, file locking, hierarchical paths — required either a middleware abstraction layer or a separate storage service entirely.

That changes with Amazon S3 Files (currently in preview), a new capability that exposes an S3 bucket — or a scoped prefix within one — as a fully mountable, NFS-backed file system. Any AWS compute resource (EC2, Lambda, ECS, EKS) can now mount an S3 bucket directly and access its contents as if it were a traditional shared drive, complete with file and directory semantics.

💡 Key Insight

S3 Files is not a replacement for Amazon EFS or FSx. It is a new access paradigm for S3 itself — enabling file system semantics directly against your existing S3 data without migration, duplication, or additional storage costs per GB.

⚡

High-Performance Access

NFS v4.2 protocol over TCP with large block sizes (1MB r/w) for throughput-intensive workloads.

🔗

Any Compute Resource

Natively attaches to EC2 instances, Lambda functions, ECS tasks, and EKS pods.

🪣

Bucket or Prefix Scope

Scope the file system to an entire S3 bucket or a specific prefix — ideal for multi-tenant isolation.

🔐

IAM-Native Security

Auto-provisioned IAM role per file system with least-privilege S3 access baked in from creation.

💰

No Extra Storage Cost

Data lives in S3. You pay for S3 storage and API requests — no separate file system storage charge.

🌐

Multi-Region Ready

Available in ap-southeast-2 and expanding. Works within standard S3 regional boundaries.

🏗️Why This Matters for Enterprise Architects

From an Enterprise Data Strategy perspective, S3 Files closes a meaningful architectural gap. Consider the common patterns we encounter in MAP (Migration Acceleration Program) engagements:

Legacy NFS workloads migrating to AWS have historically required a lift to EFS or FSx for Windows/ONTAP — each with its own pricing, provisioning model, and operational overhead. S3 Files offers a leaner path for workloads whose primary requirement is sequential read/write access to large files in a shared namespace, not low-latency random I/O.

ML/AI training pipelines often involve massive datasets living in S3 that must be presented to training frameworks (PyTorch, TensorFlow, Hugging Face) as a mounted file system. Previously this required a FUSE-based client: either community tools such as s3fs and goofys, with varying support, performance characteristics, and security postures, or AWS's own open-source Mountpoint for Amazon S3 (mountpoint-s3), which supports only a subset of file operations. S3 Files is an AWS-managed alternative that speaks standard NFS.

Agentic AI workflows built on frameworks like LangGraph or the AWS Strands SDK increasingly need agents to read and write structured data stores as part of tool invocations. Mounting S3 directly as a file system gives agents a familiar, POSIX-compatible interface without custom S3 SDK tooling in every function.

⚠️ Architecture Consideration

S3 Files is optimised for throughput-heavy, large-file sequential workloads. It is not a substitute for EFS or FSx when your workload demands sub-millisecond random I/O, POSIX file locking semantics, or strong consistency for concurrent writers. Validate your I/O profile before selecting this as your primary storage tier.
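To make "validate your I/O profile" concrete, here is a minimal sketch that compares sequential and random-order reads of one large file. The function name and defaults are my own, not part of any AWS tooling, and results on a warm page cache will look far better than a cold read, so treat the numbers as a rough signal only.

```python
import os
import random
import time


def read_throughput(path: str, block_size: int = 1 << 20, random_order: bool = False) -> float:
    """Read every block of the file once and return throughput in MiB/s.

    block_size defaults to 1 MiB to match the rsize/wsize of the mount.
    With random_order=True the same blocks are read in shuffled order,
    which approximates a random I/O access pattern.
    """
    size = os.path.getsize(path)
    offsets = list(range(0, size, block_size))
    if random_order:
        random.shuffle(offsets)

    total = 0
    start = time.perf_counter()
    # buffering=0 avoids Python-level buffering hiding the access pattern
    with open(path, "rb", buffering=0) as f:
        for offset in offsets:
            f.seek(offset)
            total += len(f.read(block_size))
    elapsed = max(time.perf_counter() - start, 1e-9)
    return (total / (1 << 20)) / elapsed
```

Run it against a representative file on the mount, for example `read_throughput("/mnt/s3files/some-large-file", random_order=True)` (the path is a placeholder). If the random-order figure is the one your workload depends on, that is a signal to look at EFS or FSx instead.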

⚖️S3 Files vs EFS vs FSx — When to Use What

| Dimension | S3 Files | Amazon EFS | FSx for Lustre |
| --- | --- | --- | --- |
| Underlying Storage | Amazon S3 | EFS-managed NFS | Lustre + S3 backend |
| Protocol | NFS v4.2 (managed) | NFS v4.0/4.1 | Lustre parallel FS |
| Latency Profile | ~ms (S3 bound) | Sub-ms to low-ms | Sub-ms (HPC grade) |
| Storage Cost Model | S3 pricing only | Per GB provisioned/used | Per GB provisioned |
| Ideal Workload | ML datasets, data lake access, large file I/O | General-purpose shared FS, CMS, DevOps | HPC, genomics, rendering |
| Max Throughput | S3 aggregate throughput | Up to 3 GB/s (Elastic) | Hundreds of GB/s |
| S3 Data Source | Native (it is S3) | Not applicable | Optional S3 import |
| Multi-AZ | Inherits S3 durability | Regional (Multi-AZ) by default; One Zone optional | Single-AZ |
| Status (Apr 2026) | Preview | GA | GA |

🚀Step-by-Step: Creating Your First S3 File System

Let me walk you through exactly how I set this up in my test environment (ririnco-s3-files-test bucket in ap-southeast-2). The console experience is refreshingly streamlined.

Prerequisites

Before you begin, ensure you have:

- An existing S3 bucket in a supported region

- IAM permissions to create file systems and IAM roles (s3files:CreateFileSystem, iam:CreateRole)

- A VPC with subnets (for mount target placement)

- Security group rules allowing NFS traffic (TCP port 2049) from your compute resources
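A quick way to check the last prerequisite from a compute instance is a plain TCP connection test against port 2049. This is a minimal sketch using only the Python standard library; the function name is my own, and the host you pass in (a mount target address) is whatever your environment exposes, which I am not assuming here.

```python
import socket


def nfs_port_reachable(host: str, port: int = 2049, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout.

    A False result usually points at a security group, NACL, or routing
    problem rather than at the file system itself.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

A successful connection only proves that the network path is open. It says nothing about IAM permissions or whether the mount itself will succeed.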

1. Navigate to the File Systems Tab

Open your S3 bucket in the AWS Console and select the new "File systems — new" tab. This is the entry point for the entire S3 Files experience and is visible only in supported regions. The getting started panel clearly illustrates the three-step workflow: create → mount → access.

2. Create the File System

Click "Create file system." You will be prompted to define the scope — either the entire bucket or a specific S3 prefix. AWS automatically provisions an IAM role (S3FilesRole_XXXXXXXX) scoped with least-privilege permissions to the chosen bucket or prefix. This role is the only identity that the NFS layer uses to interact with S3 under the hood.
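To reason about what a prefix scope means for paths, the sketch below maps an S3 object key to the path it would have on the mount. It rests on an assumption I have not verified against AWS documentation: that the scoped prefix becomes the root of the file system. The function name and the default mount point are illustrative only.

```python
from pathlib import PurePosixPath


def key_to_mount_path(key: str, scope_prefix: str = "", mount_point: str = "/mnt/s3files") -> str:
    """Map an S3 object key to its expected path under the mount point.

    ASSUMPTION: the scoped prefix is presented as the file system root,
    so it is stripped from the key before joining with the mount point.
    """
    prefix = scope_prefix.strip("/")
    if prefix:
        if not (key == prefix or key.startswith(prefix + "/")):
            raise ValueError(f"key {key!r} is outside scope {scope_prefix!r}")
        relative = key[len(prefix):].lstrip("/")
    else:
        relative = key.lstrip("/")
    return str(PurePosixPath(mount_point) / relative)
```

Under that assumption, a bucket-wide scope maps `datasets/train/a.csv` to `/mnt/s3files/datasets/train/a.csv`, while a scope of `datasets/` maps the same key to `/mnt/s3files/train/a.csv`. Keys outside the scope are rejected rather than silently mapped.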

3. Configure Mount Targets (VPC Placement)

S3 Files creates mount targets inside your VPC — similar to how EFS places ENIs in your subnets. Select your target VPC, subnets, and security group. For production Landing Zones, place mount targets in your private data subnets and restrict ingress with a dedicated security group allowing only your compute layer SG as a source.

4. Confirm and Wait for "Available" Status

File system creation takes approximately 60–90 seconds. Once the status shows Available (green), the file system is ready to attach. Note your file system ID — you will need it for mount commands and IAM policies.
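If you script the creation step, the wait can be expressed as a small polling loop. The sketch below is deliberately API-agnostic: `get_status` is a hypothetical callable that you would implement with whatever CLI or SDK call returns the file system status, since I am not assuming a specific S3 Files API here. Only the "Available" status string comes from the console.

```python
import time
from typing import Callable


def wait_until_available(
    get_status: Callable[[], str],
    timeout_s: float = 180.0,
    interval_s: float = 5.0,
) -> bool:
    """Poll get_status() until it returns "Available" or the timeout expires.

    Returns True when the file system becomes available, False on timeout.
    The default timeout is roughly double the 60-90 seconds seen in testing.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        if get_status() == "Available":
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)
```

Keeping the status lookup as an injected function also makes the loop easy to unit test with a fake.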

📸Screenshot 1: The new "File systems — new" tab in the S3 bucket console (bucket: ririnco-s3-files-test). The three-step guide — Create → Mount → Access — maps directly to the NFSv4.2 architecture AWS provisions behind the scenes.

📸Screenshot 2: The file system detail view — fs-0a9a126b056ef3649 — showing the auto-provisioned IAM role (S3FilesRole_7f8790), ARN, scope (s3://ririnco-s3-files-test), and native attach options for EC2, Lambda, and ECS. Created: April 9, 2026, 21:01 UTC+12.

🔌Mounting to Compute Resources

Option A: EC2 Instance (via CloudShell or SSH)

The simplest path to validate your setup is mounting from an EC2 instance. The console provides ready-to-use mount commands pre-populated with your file system ID and DNS name.

# Step 1 — Create mount point directory

sudo mkdir -p /mnt/s3files

# Step 2 — Mount the S3 file system (NFSv4.2)

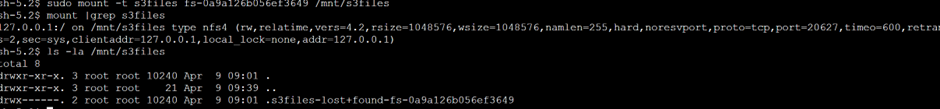

sudo mount -t s3files fs-0a9a126b056ef3649 /mnt/s3files

# Step 3 — Verify mount is active

mount | grep s3files

# Step 4 — List contents (your S3 objects appear as files/directories)

ls -la /mnt/s3files


📸Screenshot 3: Live terminal output from the EC2 mount. The mount | grep s3files output confirms an NFSv4.2 connection (nfs4, TCP port 20627, rsize=1048576, wsize=1048576 — 1MB block size for high-throughput I/O). The ls -la output shows the S3Files lost+found directory, confirming a successful mount.

✅ What the Mount Output Tells You

The NFS mount options visible in Screenshot 3 are revealing: vers=4.2, rsize=1048576, wsize=1048576 (1MB block sizes), hard mount (retries indefinitely on network errors), noresvport, and proto=tcp. This configuration is optimised for large sequential I/O — consistent with ML/data lake use cases rather than low-latency transactional workloads.
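The same check can be automated. The sketch below parses one line of `mount` output and reports any option that differs from the values listed above. The helper names are my own, and the sample line in the usage note is illustrative rather than copied from the screenshot.

```python
def parse_mount_options(mount_line: str) -> dict:
    """Parse the parenthesised option list at the end of a `mount` output line.

    Flags without a value (e.g. "hard") map to True; key=value pairs
    keep the value as a string.
    """
    start = mount_line.rfind("(")
    end = mount_line.rfind(")")
    if start == -1 or end < start:
        raise ValueError("no option list found in mount line")
    options = {}
    for item in mount_line[start + 1:end].split(","):
        item = item.strip()
        if not item:
            continue
        key, sep, value = item.partition("=")
        options[key] = value if sep else True
    return options


# Options described in the mount output above
EXPECTED_OPTIONS = {
    "vers": "4.2",
    "rsize": "1048576",
    "wsize": "1048576",
    "proto": "tcp",
    "hard": True,
    "noresvport": True,
}


def mismatched_options(mount_line: str, expected: dict = EXPECTED_OPTIONS) -> dict:
    """Return {option: actual_value} for every expected option that differs."""
    actual = parse_mount_options(mount_line)
    return {k: actual.get(k) for k, v in expected.items() if actual.get(k) != v}
```

Feeding it a line such as `127.0.0.1:/ on /mnt/s3files type nfs4 (rw,vers=4.2,rsize=1048576,wsize=1048576,hard,noresvport,proto=tcp)` returns an empty dict, while a mount negotiated at a lower NFS version would surface under the `vers` key.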

Option B: Add to /etc/fstab for Persistence

# Persistent mount across reboots — add to /etc/fstab

fs-0a9a126b056ef3649 /mnt/s3files s3files _netdev,noresvport,tls 0 0

# Validate the fstab entry without rebooting

sudo mount -a

Option C: Lambda Function (Ephemeral Mount)

Lambda function configuration (via console or IaC):

{
  "FileSystemConfigs": [
    {
      "Arn": "arn:aws:s3files:ap-southeast-2:ACCOUNT_ID:file-system/fs-0a9a126b056ef3649",
      "LocalMountPath": "/mnt/s3files"
    }
  ],
  "VpcConfig": {
    "SubnetIds": ["subnet-xxxxxxxx"],
    "SecurityGroupIds": ["sg-xxxxxxxx"]
  }
}
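Once the file system is attached, the function code needs nothing S3-specific. Here is a minimal handler sketch that lists the top level of the mount. The `S3FILES_MOUNT_PATH` environment variable is my own convention for keeping the path configurable, not something Lambda or S3 Files sets for you.

```python
import json
import os
from pathlib import Path


def handler(event, context):
    """List the top-level entries of the mounted file system."""
    # Hypothetical env var; falls back to the LocalMountPath used above
    mount = Path(os.environ.get("S3FILES_MOUNT_PATH", "/mnt/s3files"))
    if not mount.is_dir():
        return {
            "statusCode": 500,
            "body": json.dumps({"error": f"{mount} is not mounted"}),
        }
    entries = [
        {"name": p.name, "is_dir": p.is_dir()}
        for p in sorted(mount.iterdir())
    ]
    return {"statusCode": 200, "body": json.dumps({"entries": entries})}
```

Checking that the mount point exists first gives a clear error when the VPC or file system configuration is wrong, which is easier to debug than a bare exception from deep inside the handler.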

Option D: ECS Task Definition

ECS task definition fragment: the volumes block sits at the top level of the task definition, and mountPoints belongs inside the relevant containerDefinitions entry. Note: ECS uses the EFS-compatible attachment API for S3 Files.

"volumes": [
  {
    "name": "s3-data-volume",
    "efsVolumeConfiguration": {
      "fileSystemId": "fs-0a9a126b056ef3649",
      "transitEncryption": "ENABLED"
    }
  }
],
"mountPoints": [
  {
    "sourceVolume": "s3-data-volume",
    "containerPath": "/data",
    "readOnly": false
  }
]

🔐Security Architecture & IAM Design

As someone whose Security-First practice is anchored in NZISM, NIST 800-53, and the AWS Well-Architected Framework, I think the IAM model for S3 Files deserves careful scrutiny. Here is what AWS provisions and what you should review.

Auto-Provisioned IAM Role (S3FilesRole)

When you create a file system, AWS automatically provisions a role in the format S3FilesRole_XXXXXXXX. This role is the identity assumed by the S3 Files service when performing S3 API calls on behalf of your NFS clients. You should review and optionally tighten this role.

🔑 IAM Policy — Least-Privilege S3 Files Role (Recommended)

The Condition block scopes access to a single VPC (aws:SourceVpc) for data perimeter enforcement. This key is only present on requests that arrive through a VPC endpoint, so test it against your actual traffic path before enforcing it.

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "S3FilesReadWrite",
      "Effect": "Allow",
      "Action": [
        "s3:GetObject",
        "s3:PutObject",
        "s3:DeleteObject",
        "s3:ListBucket",
        "s3:GetBucketLocation",
        "s3:AbortMultipartUpload",
        "s3:ListMultipartUploadParts"
      ],
      "Resource": [
        "arn:aws:s3:::ririnco-s3-files-test",
        "arn:aws:s3:::ririnco-s3-files-test/*"
      ],
      "Condition": {
        "StringEquals": {
          "aws:SourceVpc": "vpc-xxxxxxxx"
        }
      }
    }
  ]
}

Security Hardening Checklist

🛡️ Security-First Controls — Review Before Production

The following controls should be validated for any S3 Files deployment in a production Landing Zone:

| Control | Implementation | Framework Alignment |
| --- | --- | --- |
| Encryption in Transit | Enable TLS (tls mount option). S3 Files uses TLS 1.2+ by default. | NIST AC-17, NZISM 16.1 |
| Encryption at Rest | Enable S3 SSE-KMS on the bucket with a customer-managed CMK. | NIST SC-28, NZISM 17.1 |
| VPC Data Perimeter | Add aws:SourceVpc condition to S3FilesRole; block public S3 access. | NIST AC-4, AWS SCP |
| Least-Privilege IAM | Scope S3FilesRole to specific bucket ARN; avoid s3:* wildcards. | NIST AC-6, CIS AWS 1.x |
| Security Group Tightening | Restrict NFS (TCP/2049) ingress to the compute SG only; never allow 0.0.0.0/0. | NIST SC-7, NZISM 18.3 |
| CloudTrail Logging | Enable S3 data events + S3Files API logging in CloudTrail. | NIST AU-2, NZISM 6.2 |
| S3 Block Public Access | Enforce all four Block Public Access settings at bucket and account level. | AWS Foundational Best Practice |
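Two of these controls (least-privilege IAM and the VPC data perimeter) are easy to check mechanically. The sketch below is a small linter over a policy document loaded as a Python dict. It is my own illustration, not an AWS tool, and it only covers the wildcard and condition checks named in the table.

```python
from typing import List


def _as_list(value) -> list:
    """IAM allows a single string where a list is expected; normalise to a list."""
    if value is None:
        return []
    return [value] if isinstance(value, str) else list(value)


def lint_policy(policy: dict, condition_key: str = "aws:SourceVpc") -> List[str]:
    """Return human-readable findings for every Allow statement in the policy."""
    findings = []
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]

    for i, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        sid = stmt.get("Sid", f"statement[{i}]")

        for action in _as_list(stmt.get("Action")):
            if action == "*" or action.endswith(":*"):
                findings.append(f"{sid}: wildcard action {action}")

        if "*" in _as_list(stmt.get("Resource")):
            findings.append(f"{sid}: wildcard resource")

        # Condition is {operator: {key: value}}; look for the key under any operator.
        # Note: this match is case-sensitive, while IAM treats key names as case-insensitive.
        condition = stmt.get("Condition", {})
        if not any(condition_key in keys for keys in condition.values()):
            findings.append(f"{sid}: missing {condition_key} condition")
    return findings
```

Run against the recommended policy above it returns an empty list, while a statement allowing `s3:*` on `*` with no condition produces three findings. A check like this fits naturally into a CI step ahead of any Terraform apply.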

🎯Enterprise Use Cases

1. ML/AI Training Pipeline Data Access

Training jobs on EC2 P/G instances or SageMaker can mount S3 datasets directly as a file system — eliminating the aws s3 cp pre-download step that often delays training runs by 10–30 minutes for large datasets. This is particularly compelling for iterative fine-tuning workflows where training data evolves frequently.
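In practice this means a data loader can walk the mount and stream files on demand instead of waiting for a bulk copy. The following is a framework-neutral sketch using only the standard library. The function names, the suffix filter, and the directory layout are illustrative assumptions, and a real PyTorch or TensorFlow pipeline would wrap something like this in its own dataset class.

```python
from pathlib import Path
from typing import Iterator


def iter_files(root: str, suffix: str = ".bin") -> Iterator[Path]:
    """Yield files under root with the given suffix, in sorted order.

    Sorting gives a deterministic order across runs and workers.
    """
    for path in sorted(Path(root).rglob(f"*{suffix}")):
        if path.is_file():
            yield path


def stream_file(path: Path, chunk_size: int = 1 << 20) -> Iterator[bytes]:
    """Yield the file contents in chunks (1 MiB by default, matching rsize)."""
    with open(path, "rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            yield chunk
```

Reading in large sequential chunks plays to the access pattern this feature is optimised for. Many small random reads across thousands of files would be a different story, so measure before committing.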

2. Agentic AI Tool Invocations (LangGraph / Strands SDK)

Agents that need to read/write structured data (CSV exports, JSON logs, embeddings snapshots) can treat the S3-backed mount point as a standard local file path. This simplifies tool function code significantly — your agent uses open('/mnt/s3files/embeddings/chunk_001.json') rather than a custom S3 SDK wrapper.

# Example: Strands SDK agent tool reading from S3 Files mount
import json

from strands import tool


@tool
def read_knowledge_chunk(chunk_id: str) -> dict:
    """Read a knowledge chunk from the S3-backed file system."""
    path = f"/mnt/s3files/knowledge/{chunk_id}.json"
    with open(path, 'r') as f:
        return json.load(f)
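One caveat with file-path tools: the chunk ID comes from the model, so it is worth validating before it is spliced into a path. The sketch below shows one way to do that in plain Python, leaving out the Strands decorator so it runs standalone. The allowed-character pattern and the helper names are my own assumptions, so adjust them to your ID scheme.

```python
import json
import re
from pathlib import Path

KNOWLEDGE_DIR = Path("/mnt/s3files/knowledge")

# Assumed ID format: letters, digits, underscore, hyphen. Rejects "/", "..", etc.
_CHUNK_ID = re.compile(r"[A-Za-z0-9_-]+")


def chunk_path(chunk_id: str, base: Path = KNOWLEDGE_DIR) -> Path:
    """Build the path for a chunk, refusing IDs that could escape the base directory."""
    if not _CHUNK_ID.fullmatch(chunk_id):
        raise ValueError(f"invalid chunk id: {chunk_id!r}")
    return base / f"{chunk_id}.json"


def read_knowledge_chunk_safe(chunk_id: str, base: Path = KNOWLEDGE_DIR) -> dict:
    """Read a knowledge chunk after validating the ID."""
    with open(chunk_path(chunk_id, base), "r", encoding="utf-8") as f:
        return json.load(f)
```

With this in place, an ID like `../secrets` raises a ValueError instead of resolving to a path outside the knowledge directory.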

3. Lift-and-Shift of NFS-Dependent Workloads (MAP Migrate Phase)

During MAP Migrate/Modernise engagements, applications with hardcoded NFS mount paths can be redirected to S3 Files without code changes, provided they do not rely on file locking or low-latency random I/O. This is especially valuable for batch processing systems, ETL pipelines, and legacy reporting tools that expect a shared network path but whose data can reside comfortably in S3.

4. Data Lake Access for ECS Microservices

ECS task definitions can attach an S3 Files volume, allowing containerised data processing microservices to consume and produce files as if operating on a shared NAS — without embedding S3 SDK calls in every service. This is a clean separation of concerns: your application code reads files; your infrastructure configuration handles the S3 mapping.

🏗️Infrastructure as Code (Terraform)

For production Landing Zone deployments, you should never be clicking through the console. Here is a Terraform module pattern for S3 Files that aligns with the AWS Well-Architected Framework.

📝 Note on Provider Support

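The aws_s3_file_system Terraform resource is expected to be available in the AWS provider v5.x+ as S3 Files moves to GA. For preview, create the file system itself with the AWS CLI or SDK, for example from a null_resource with a local-exec provisioner. The module below covers only the supporting resources: the bucket, encryption, public access block, IAM role, and security group.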

# modules/s3-files/main.tf

resource "aws_s3_bucket" "data_lake" {
  bucket = var.bucket_name
  tags   = var.tags
}

resource "aws_s3_bucket_server_side_encryption_configuration" "sse" {
  bucket = aws_s3_bucket.data_lake.id

  rule {
    apply_server_side_encryption_by_default {
      sse_algorithm     = "aws:kms"
      kms_master_key_id = var.kms_key_arn
    }
    bucket_key_enabled = true
  }
}

resource "aws_s3_bucket_public_access_block" "block" {
  bucket                  = aws_s3_bucket.data_lake.id
  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}

# IAM Role for S3 Files service
resource "aws_iam_role" "s3files_role" {
  name = "S3FilesRole-${var.environment}"

  assume_role_policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Action    = "sts:AssumeRole"
      Effect    = "Allow"
      Principal = { Service = "s3files.amazonaws.com" }
    }]
  })
}

resource "aws_iam_role_policy" "s3files_policy" {
  name = "S3FilesLeastPrivilege"
  role = aws_iam_role.s3files_role.id

  policy = jsonencode({
    Version = "2012-10-17"
    Statement = [{
      Effect = "Allow"
      Action = [
        "s3:GetObject", "s3:PutObject", "s3:DeleteObject",
        "s3:ListBucket", "s3:GetBucketLocation",
        "s3:AbortMultipartUpload", "s3:ListMultipartUploadParts"
      ]
      Resource = [
        aws_s3_bucket.data_lake.arn,
        "${aws_s3_bucket.data_lake.arn}/*"
      ]
      Condition = {
        StringEquals = { "aws:SourceVpc" = var.vpc_id }
      }
    }]
  })
}

# Security Group for S3 Files mount targets
resource "aws_security_group" "s3files_sg" {
  name        = "s3files-mount-target-sg"
  description = "Allow NFS from compute layer only"
  vpc_id      = var.vpc_id

  ingress {
    from_port       = 2049
    to_port         = 2049
    protocol        = "tcp"
    security_groups = [var.compute_sg_id] # least-privilege source
  }

  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }
}

🏆Key Takeaways

After hands-on testing in ap-southeast-2, here is my architectural verdict on Amazon S3 Files:

✅

Strong Fit

ML/AI training data, data lake access from compute, legacy NFS migration, agentic AI file tool patterns.

⚠️

Evaluate Carefully

Workloads needing low-latency random I/O, POSIX locking, or multiple concurrent writers with strong consistency.

🔐

Security Posture

IAM-native, auto-provisioned roles are a welcome default — but always review, tighten, and add VPC conditions before production.

💡

Cost Advantage

No separate file system storage cost beyond S3 pricing. For data already in S3, the incremental cost to get file system access is minimal.

🚀 Final Verdict

S3 Files is a well-designed feature that fills a genuine gap in the AWS storage portfolio. For organisations with significant data assets in S3 — especially those building on AI/ML workloads or modernising NFS-dependent applications — it is absolutely worth evaluating now. The three-step console experience is genuinely polished, the NFSv4.2 mount output is exactly what you would hope to see, and the IAM-first design is commendable. Watch this space closely as it moves toward GA.
